Curate XR

XR experience guidance using real-time LLM-driven conversational agents

Project Info

Start date: January 2025

End date: December 2026

Funding: –

Coordinator: Artanim

Summary

Imagine yourself inside a museum, whether real or entirely virtual. The elegant space is filled with fascinating works of art. Some you recognize immediately, but who was the artist again? Others grab your attention but are entirely unfamiliar. If only someone were around to tell you about the artists or provide details on the works themselves. Thankfully, a virtual museum guide is in the room with you.

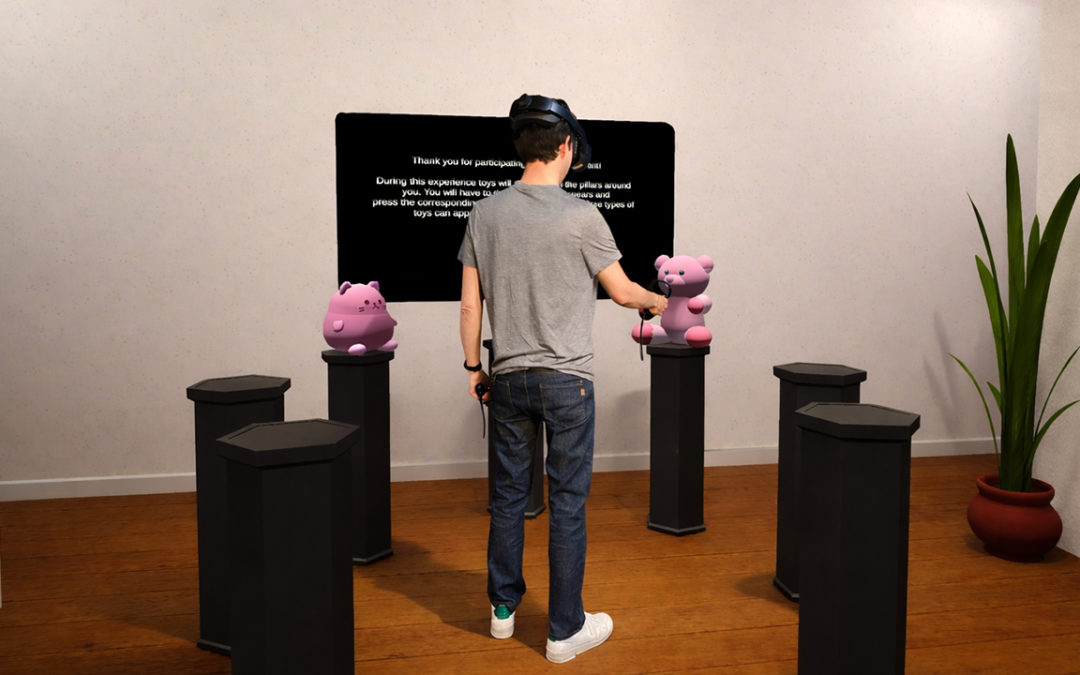

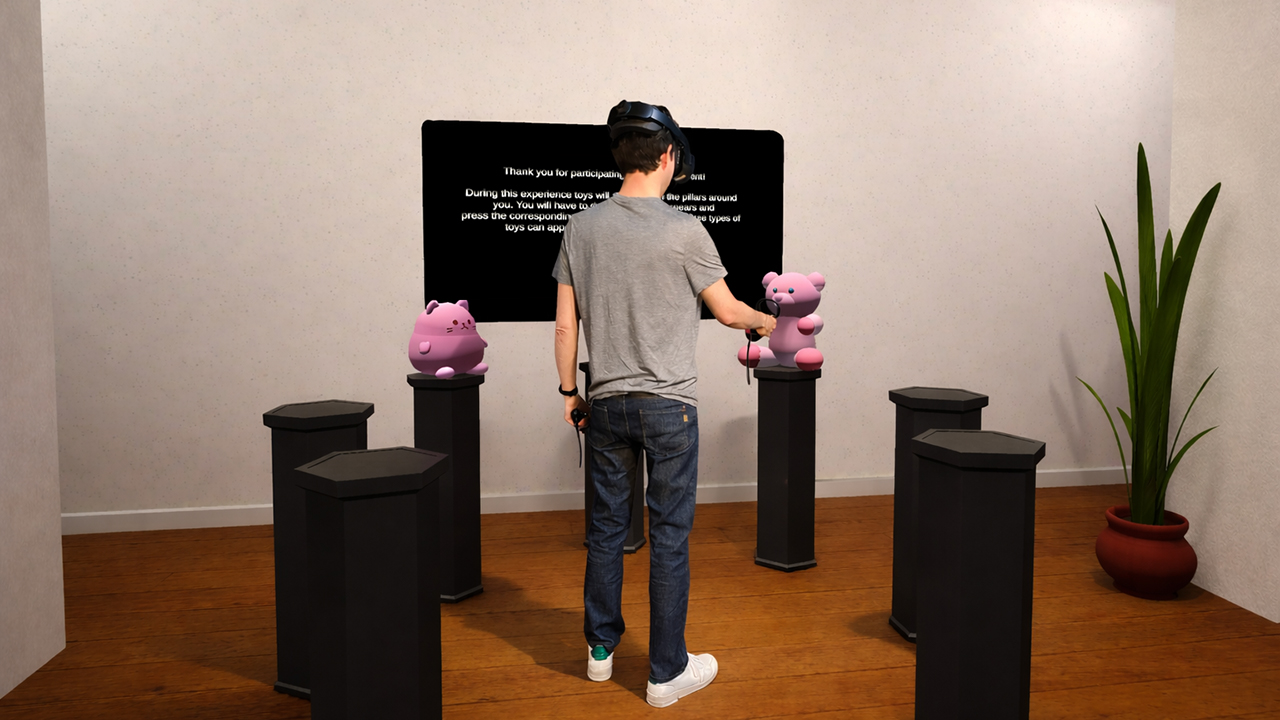

One drawback of more traditional approaches to such a museum guide scenario is the need to define all possible questions and responses up front. This quickly leads to intractable production constraints and to an end result that, while informative, may feel unnatural or robotic because it is entirely predetermined. The goal of our CurateXR project is to study how a modern generative AI chatbot backed by a Large Language Model (LLM) can provide a real-time and entirely natural means of interacting with a virtual agent in virtual reality (VR) or augmented reality (AR) scenarios. Powered by an animation system that uses Motion Matching and pathfinding to navigate the space naturally, the guide will happily stroll along with you.

All interactions happen through natural speech. Just ask any question you may have, and the guide will reply without delay, taking into account both the context of the space it has been given up front and real-time contextual cues such as your location and the artwork you’re looking at. The guide will engage in a natural conversation with you, and if you feel more comfortable speaking another language, just ask it to switch. Using a generative AI chatbot lowers many of the barriers to entry users may feel when interacting with extended reality applications for the first time, and it provides a level of accessibility that is hard to replicate otherwise.
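To make the idea of combining up-front context with real-time cues more concrete, here is a minimal sketch in Python. It is not the project's actual implementation: the names `Artwork`, `VisitorState` and `build_messages` are hypothetical, the artworks are placeholders, and the role/content message layout is simply a common chat convention assumed here for illustration. The sketch only assembles the text that would be sent to a chat model; speech recognition, the model call and speech synthesis are left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Artwork:
    """Static description of one artwork, known before the visit starts."""
    title: str
    artist: str
    notes: str


@dataclass
class VisitorState:
    """Real-time cues sampled from the XR session (hypothetical fields)."""
    room: str
    gazed_artwork: Optional[str] = None  # title of the artwork in view, if any
    language: str = "English"


def build_messages(
    catalog: List[Artwork], state: VisitorState, question: str
) -> List[Dict[str, str]]:
    """Combine up-front museum context with live cues into chat messages."""
    catalog_text = "\n".join(
        f"- {a.title} by {a.artist}: {a.notes}" for a in catalog
    )
    # Up-front context: the guide's role, reply language and the catalog.
    system = (
        "You are a friendly museum guide. Answer briefly, "
        f"in {state.language}.\nArtworks in this museum:\n{catalog_text}"
    )
    # Real-time cues: where the visitor is and what they are looking at.
    focus = state.gazed_artwork or "no artwork in particular"
    cues = f"[Visitor is in {state.room}, looking at {focus}.]"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": f"{cues}\n{question}"},
    ]


# Placeholder data, not real artworks.
catalog = [
    Artwork("Placeholder Piece A", "Example Artist A", "oil on canvas"),
    Artwork("Placeholder Piece B", "Example Artist B", "bronze sculpture"),
]
state = VisitorState(room="Gallery 1", gazed_artwork="Placeholder Piece A")
for message in build_messages(catalog, state, "Who made this?"):
    print(message["role"], "->", message["content"])
```

Because the cues are attached to each question, a vague request such as "Who made this?" can be resolved against whatever the visitor is currently looking at.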

Generative AI chatbots aren’t limited to conversations. They can also turn your words into concrete actions. To demonstrate this, at one end of the museum space you will find a virtual sculpture experience, controlled by another agent. Through natural speech you can not only summon three-dimensional shapes in your desired color, but also have them move as you wish. And if you’re not satisfied with the results, there is no need to start from scratch. Just tell your virtual helper what changes you would like to make, and you’ll see the relevant updates happen before your eyes.
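One way to picture how words become actions is to have the model answer with a small structured command that the application then executes. The Python sketch below illustrates that general pattern under stated assumptions. The JSON command shape (`action` plus `args`), the `Scene` and `Shape` classes and the `apply_command` helper are all hypothetical, and the source does not describe the mechanism CurateXR actually uses.

```python
import json
from dataclasses import dataclass, field
from typing import Dict, Tuple


@dataclass
class Shape:
    kind: str
    color: str
    position: Tuple[float, float, float] = (0.0, 0.0, 0.0)


@dataclass
class Scene:
    """Toy stand-in for the sculpture space (hypothetical)."""
    shapes: Dict[int, Shape] = field(default_factory=dict)
    next_id: int = 1

    def spawn(self, kind: str, color: str) -> int:
        shape_id = self.next_id
        self.shapes[shape_id] = Shape(kind, color)
        self.next_id += 1
        return shape_id

    def recolor(self, shape_id: int, color: str) -> None:
        self.shapes[shape_id].color = color

    def move(self, shape_id: int, dx: float, dy: float, dz: float) -> None:
        x, y, z = self.shapes[shape_id].position
        self.shapes[shape_id].position = (x + dx, y + dy, z + dz)


def apply_command(scene: Scene, raw_json: str):
    """Run one structured command, assumed to be produced by the agent."""
    command = json.loads(raw_json)
    handlers = {
        "spawn": scene.spawn,
        "recolor": scene.recolor,
        "move": scene.move,
    }
    action = command.get("action")
    if action not in handlers:
        # Reject anything outside the small, known set of actions.
        raise ValueError(f"unsupported action: {action!r}")
    return handlers[action](**command.get("args", {}))


# "Give me a red cube", then "make it blue and lift it up a bit".
scene = Scene()
cube_id = apply_command(
    scene, '{"action": "spawn", "args": {"kind": "cube", "color": "red"}}'
)
apply_command(scene, json.dumps(
    {"action": "recolor", "args": {"shape_id": cube_id, "color": "blue"}}
))
apply_command(scene, json.dumps(
    {"action": "move",
     "args": {"shape_id": cube_id, "dx": 0.0, "dy": 1.0, "dz": 0.0}}
))
print(scene.shapes[cube_id])
```

Because each follow-up command targets an existing shape by its identifier, a request for changes updates what is already in the scene instead of rebuilding it from scratch.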

Integrating a generative AI chatbot into your VR scenarios can level up your experience by providing an entirely natural means of engagement. Just put your headset on and go. And while the museum scenario was chosen as the use case for this project, the potential range of scenarios that can be supported, from training and education to rehabilitation, entertainment and many more, is virtually endless.