by Marlène Arévalo | Apr 20, 2026

Project Info

Client:

Cinzia Fossati & Laurence Moletta

Year:

2026

Summary

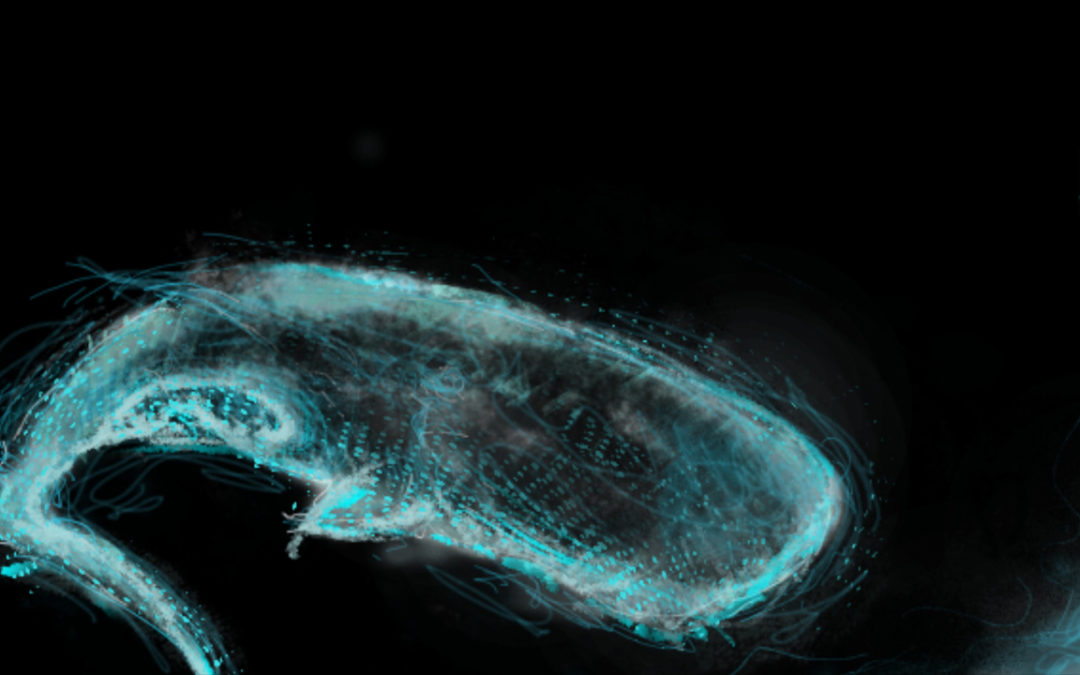

Symphony of the Deep is a multi-user immersive VR experience inviting participants to step into the sensory world of sperm whales through sound, movement, and collective presence.

Conceived by Cinzia Fossati (writer-director) and Laurence Moletta (writer-composer), the project explores new forms of shared perception and embodied interaction in virtual reality. Participants are invited to navigate an underwater environment not through vision alone, but through echolocation, using their own voices to generate acoustic and visual feedback that progressively reveals their surroundings and enables interaction with others.

At the core of this first R&D phase, Artanim is developing a multi-user VR prototype focused on two key challenges: collective locomotion inspired by swimming, and real-time vocal interaction (echolocation) as a means of perception and communication. This approach transforms sound into a central mechanic for navigation, interaction, and storytelling.

At the heart of the experience lies a clear environmental intention: to raise awareness of underwater noise pollution and human impact on marine ecosystems by inviting participants to perceive the ocean through the sensory world of sperm whales.

The project is currently in its initial research and development phase. To learn more and follow its progress, visit: https://immersivelab.ch/

Credits

Director

Cinzia Fossati

Authors

Cinzia Fossati & Laurence Moletta

R&D

Artanim

Sound design & music

Laurence Moletta

Supported by

Centre national du cinéma et de l’image animée (CNC)

Migros Storylab

Cinéforom

Ville de Genève

Etat de Genève

Accompanied by

Laetitia Bochud – Virtual Switzerland

by artanim | Oct 29, 2025

Project Info

Start date:

January 2025

End date:

December 2026

Funding:

–

Coordinator:

Artanim

Summary

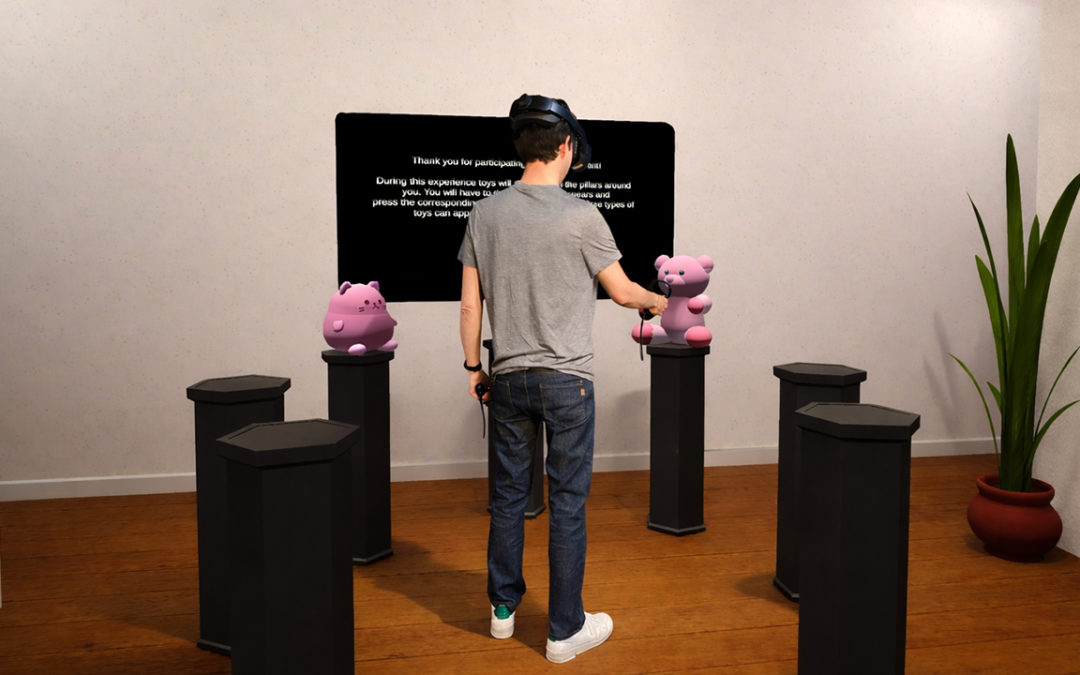

Imagine yourself inside a museum environment, whether real or entirely virtual. The elegant space is filled with fascinating works of art. Some you recognize immediately, but who was the artist again? Others grab your attention but are entirely unfamiliar. If only someone were around to tell you about the artists behind the works or give you details on the works themselves. Thankfully, a virtual museum guide is in the room with you.

One drawback of more traditional approaches to such a museum guide scenario is the need to establish all possible questions and responses up front. This quickly leads to intractable production constraints, and the end result, while informative, may feel unnatural or robotic because it is entirely predetermined. The goal of our CurateXR project is to study how a modern generative AI chatbot backed by a Large Language Model (LLM) can provide a real-time and entirely natural means of interacting with a virtual agent in virtual reality (VR) or augmented reality (AR) scenarios. Powered by an animation system that uses Motion Matching and pathfinding to navigate the space naturally, the guide will happily stroll along with you.

All interactions happen through natural speech. Just ask any question you may have, and the guide will reply without delay, taking into account the description of the space it was given up front as well as real-time contextual clues such as your location and the artwork you’re looking at. Not only will the guide engage in a natural conversation with you, but if you feel more comfortable speaking in another language, just ask it to switch. A generative AI chatbot lowers many of the barriers to entry users may feel when interacting with extended reality applications for the first time, and it provides a level of accessibility that is hard to replicate otherwise.
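As a rough illustration of how a description of the space and real-time clues might be combined for each question, here is a minimal sketch. It is not CurateXR's actual implementation: the `VisitorState` fields, the `build_messages` helper, and the role/content message layout (a convention common to many chat-style LLM APIs) are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class VisitorState:
    """Real-time clues about the visitor (hypothetical fields)."""
    location: str
    gazed_artwork: Optional[str] = None


def build_messages(space_description: str, state: VisitorState, question: str) -> list:
    """Combine the static space description with live context for one turn."""
    # Static context: provided once, up front, and reused for every turn.
    system = (
        "You are a museum guide. Answer in the language the visitor uses.\n"
        f"Space description:\n{space_description}"
    )
    # Dynamic context: refreshed each turn from the visitor's current state.
    looking_at = state.gazed_artwork or "nothing in particular"
    context = f"[Visitor location: {state.location}; looking at: {looking_at}]"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": f"{context}\n{question}"},
    ]
```

With this layout, a question such as "Who painted this?" can be resolved against the artwork named in the context line, without the visitor having to name it.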

Generative AI chatbots aren’t limited to conversations. They can also turn your words into concrete actions. To demonstrate this, at one end of the museum space you will find a virtual sculpture experience, controlled by another agent. Through natural speech you can not only summon three-dimensional shapes in the color of your choice, but also have them move as you wish. And if you’re not satisfied with the results, there is no need to start from scratch. Just tell your virtual helper what changes you would like to make, and you’ll see the relevant updates happen before your eyes.
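One common way to turn words into actions is to have the model emit a structured "call" that the application then executes. The sketch below assumes that pattern; it is not a description of how the sculpture agent is actually built. The `SculptureScene` class, the action names, and the JSON layout are hypothetical, and the model's output is simulated with hand-written JSON.

```python
import json


class SculptureScene:
    """Toy scene holding shapes by id (hypothetical stand-in for the VR scene)."""

    ALLOWED_FIELDS = {"kind", "color", "motion"}

    def __init__(self):
        self.shapes = {}
        self._next_id = 1

    def create_shape(self, kind, color):
        shape_id = f"shape-{self._next_id}"
        self._next_id += 1
        self.shapes[shape_id] = {"kind": kind, "color": color, "motion": "still"}
        return shape_id

    def update_shape(self, shape_id, **changes):
        # Edit an existing shape in place instead of rebuilding the sculpture.
        if shape_id not in self.shapes:
            raise KeyError(f"unknown shape: {shape_id}")
        unknown = set(changes) - self.ALLOWED_FIELDS
        if unknown:
            raise ValueError(f"unsupported fields: {sorted(unknown)}")
        self.shapes[shape_id].update(changes)
        return shape_id


def dispatch(scene, call_json):
    """Run one structured call of the form {"name": ..., "arguments": {...}}."""
    call = json.loads(call_json)
    handlers = {
        "create_shape": scene.create_shape,
        "update_shape": scene.update_shape,
    }
    name = call["name"]
    if name not in handlers:
        raise ValueError(f"unknown action: {name}")
    return handlers[name](**call["arguments"])


scene = SculptureScene()
sphere = dispatch(scene, '{"name": "create_shape", "arguments": {"kind": "sphere", "color": "red"}}')
# A follow-up request ("make it spin") changes only the motion field.
dispatch(scene, json.dumps({"name": "update_shape", "arguments": {"shape_id": sphere, "motion": "spin"}}))
```

Because the follow-up call only touches the fields it names, the existing shape keeps its kind and color, which mirrors the idea of adjusting a result rather than starting from scratch.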

Integrating a generative AI chatbot into your VR scenarios can level up your experience by providing an entirely natural means of engagement. Just put your headset on and go. And while the museum scenario was chosen as the use-case for this project, the potential range of scenarios that can be supported – from training and education to rehabilitation, entertainment and many more – is virtually endless.

by artanim | Jul 16, 2025

Project Info

Producer:

Dreamscape Immersive / Artanim

Year:

2025

Summary

Your Dreamscape experience is riddled with bugs! These dreadful digital pests might very well eat through everything they see, and you’re the only one standing in their way. Hang on to your speedster as you launch through the rifts at full speed, and explore many corrupted worlds! Set a course for the asteroid field, join forces in a post-apocalyptic desert… and above all, stay alert: a well-deserved break could be the perfect grounds for an ambush. Keep your lasers hot, aim for the highest score, and DEBUG. THIS. MESS!

In this new opus, Artanim collaborated with the Dreamscape Immersive team to create a free-flying immersive VR experience that combines a subtly balanced mix of storytelling and gaming with a splash of humor.

Exhibited at the Dreamscape center in Geneva, level-1 of Confederation Center, until December 28th, 2025.

More information on the VR technology here.

Credits

Production, project direction, scenario, 3D content creation, gameplay and VR platform

Dreamscape Immersive / Artanim

Music

Alain Renaud

Voice actor

Adrien Buensod

by Marlène Arévalo | Jan 22, 2025

Project Info

Client:

Alan Bogona

Year:

2024

Summary

The experimental video project Being Laser – Restless Limbs explores the interactions between light, the human body, and technology. Synthesizing speculative research that combines contemporary utopias and ancient archetypes related to artificial lighting, it highlights the cross-influences between Western and East Asian cultures. The project combines computer-generated (CGI) animated sequences with live footage shot in China, Switzerland, and Italy. The animated sequences feature ethereal, luminous, and ever-changing avatars, brought to life using motion capture techniques.

For this project, we recorded two body-flying performers in a vertical wind tunnel with our Xsens inertial suit, and captured dance movements in the studio using our Vicon motion capture system.

Credits

Indoor skydiving performers

Benjamin Guex, Olivier Longchamp (RealFly Sion)

Butoh dancer

Flavia Ghisalberti

Motion capture

Artanim

Supported by

Pro Helvetia